Effective date: Sep 1, 2020

Why Silo?

Silo was built around one simple idea: Own Yourself.

We are on a mission to empower individuals to own and control their data across the systems they use every day.

What problem is Silo solving today?

AI systems today lack a persistent, interoperable memory layer. Models are stateless by design, context is fragmented across surfaces, and users lose control over their own data and the continuity of their context.

Silo is building the user-owned memory and custody layer for AI — a neutral control plane that preserves context, continuity, and governance across AI systems and model boundaries.

Unlike applications that store fragments of user context for their own use, Silo’s memory layer is:

- Persistent — memory survives across sessions and models

- Model-agnostic — the same memory can be used by any model

- User-owned — users control consent, retention, and revocation

- Neutral — not owned or controlled by any single platform or model provider

This fills a missing layer in the AI stack, one that enables long-term continuity and interoperability across platforms.

Why does this opportunity exist now?

The AI ecosystem is rapidly evolving:

- Model quality is commoditizing — the model isn’t the differentiator anymore

- The real value is in context and continuity across use cases and clouds

- Memory silos are forming around apps and platforms, fragmenting user experience

There is no existing neutral, user-controlled memory layer that can:

- Be shared across AI tools

- Preserve history without vendor lock-in

- Respect consent and governance requirements

Silo is positioned to become that layer.

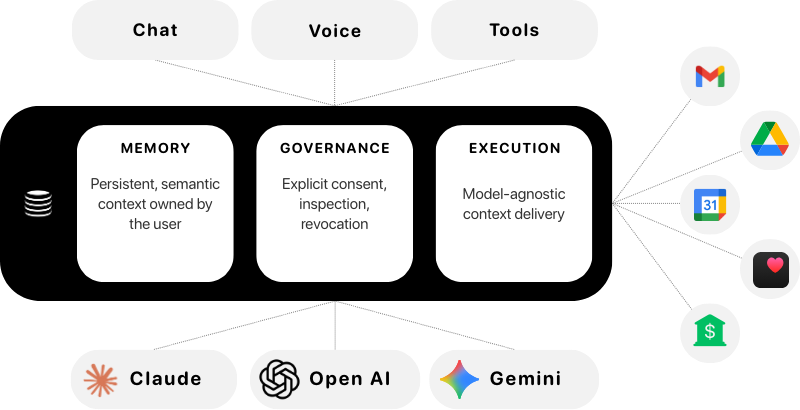

Silo’s Architecture (high-level)

Silo governs how personal context is accessed and shared.

Silo is not just an app — it’s an infrastructure component with three logical layers:

1. Memory Storage Layer

- A semantic, persistent store of user context

- Indexed and retrievable independently of any surface

2. Governance & Consent Layer

- Explicit consent management

- Control over retention, erasure, inspection, and revocation

3. Execution & Context Delivery Layer

- Efficient delivery of context to any model

- Model-agnostic APIs and adapters

Surfaces like chat apps, assistants, and tools are clients of this layer, not owners of the memory.
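To make the three-layer split concrete, here is a minimal Python sketch of how a surface might receive context only through a consent check, so that it remains a client of the memory rather than its owner. Everything in it (`UserMemory`, `MemoryRecord`, `grant`, `revoke`, `erase`, `context_for`, and consent expressed as per-client tag sets) is hypothetical and assumes a simple in-memory store; it is an illustration, not Silo’s actual API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MemoryRecord:
    """One unit of user context held by the storage layer (hypothetical shape)."""
    record_id: str
    text: str
    tags: set[str] = field(default_factory=set)


class UserMemory:
    """Toy model of the three layers: storage, consent, and context delivery."""

    def __init__(self) -> None:
        self._records: dict[str, MemoryRecord] = {}  # storage layer
        self._grants: dict[str, set[str]] = {}       # consent layer: client -> allowed tags

    # --- Memory storage layer ---
    def remember(self, record: MemoryRecord) -> None:
        self._records[record.record_id] = record

    def erase(self, record_id: str) -> None:
        # Erasure removes the record for every client at once.
        self._records.pop(record_id, None)

    # --- Governance & consent layer ---
    def grant(self, client: str, tags: set[str]) -> None:
        self._grants.setdefault(client, set()).update(tags)

    def revoke(self, client: str) -> None:
        # Revocation drops all of this client's grants; the memory itself is untouched.
        self._grants.pop(client, None)

    # --- Execution & context delivery layer ---
    def context_for(self, client: str) -> list[str]:
        """Return only the records whose tags this client was granted, in insertion order."""
        allowed = self._grants.get(client, set())
        return [r.text for r in self._records.values() if r.tags & allowed]


if __name__ == "__main__":
    mem = UserMemory()
    mem.remember(MemoryRecord("r1", "Prefers metric units", {"preferences"}))
    mem.remember(MemoryRecord("r2", "Working on a tax filing", {"finance"}))
    mem.grant("chat-app", {"preferences"})
    print(mem.context_for("chat-app"))  # ['Prefers metric units']
    mem.revoke("chat-app")
    print(mem.context_for("chat-app"))  # []
```

The design choice the sketch illustrates is that the surface (`"chat-app"`) never holds the records: it can only ask for context, and revoking consent or erasing a record takes effect for every surface without any cooperation from them.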

Why this is hard

Building a memory/control plane for AI requires solving fundamental challenges:

- Neutrality: must not become another silo

- Governance: explicit user consent, revocation, compliance

- Model agnosticism: memory must work across model architectures

- Performance: context delivery without added latency or data leakage

These are architectural challenges, not product features.

What Silo enables long-term

Silo is progressing in stages:

- Launch and memory persistence foundation

- Governance and consent controls

- Model-agnostic context APIs

- Ecosystem partnerships and developer enablement

- Enterprise and regulated workflows

Each stage deepens the ecosystem’s structural dependence on Silo over time.

Investment thesis

We are currently engaging select long-arc investors to support building the foundational memory and governance layer for AI. This work includes, but is not limited to:

- Building memory layer primitives

- Establishing neutral governance mechanisms

- Expanding integrations with AI models and surfaces

We are prioritizing long-arc alignment and architectural clarity over short-term growth metrics.

How the Silo memory layer contrasts with existing approaches

Silo is not:

- Just another privacy app

- A branded AI assistant

- A data store tied to one compute provider

Silo is:

- A control plane

- A memory layer independent of compute

- A trusted, user-governed layer

This places Silo between models and surfaces — a unique and defensible position.

For more information, please reach out to info@onesilo.com or request access to our investor deck.